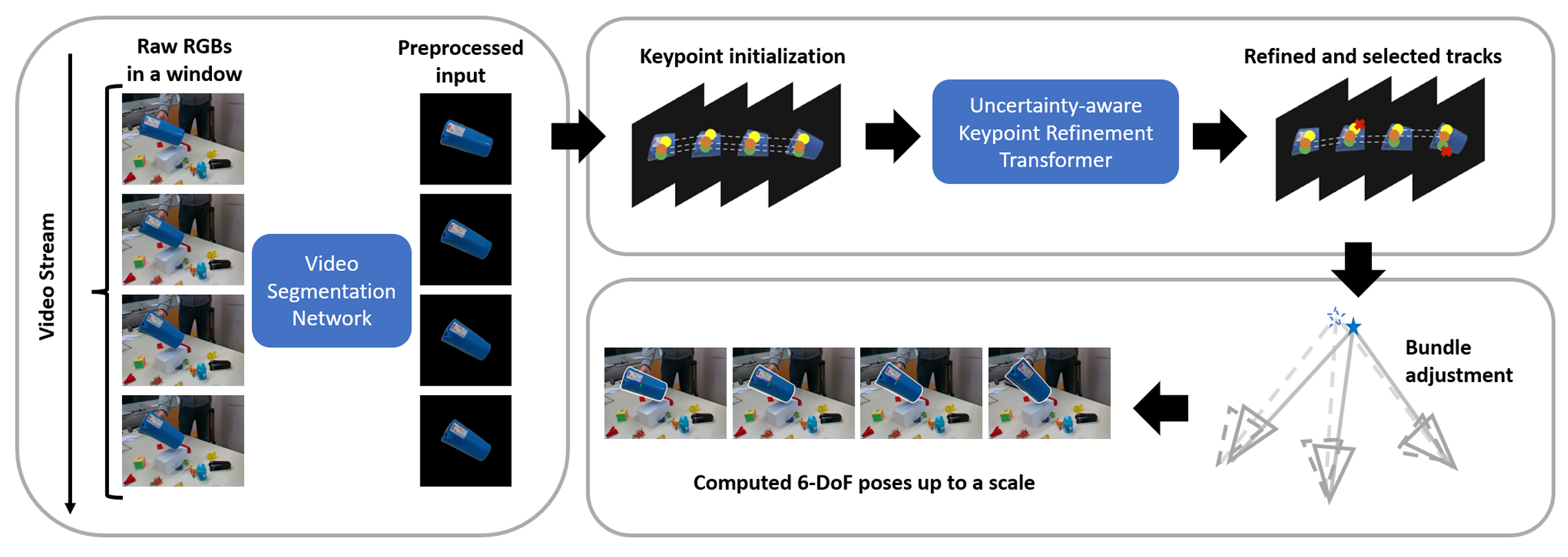

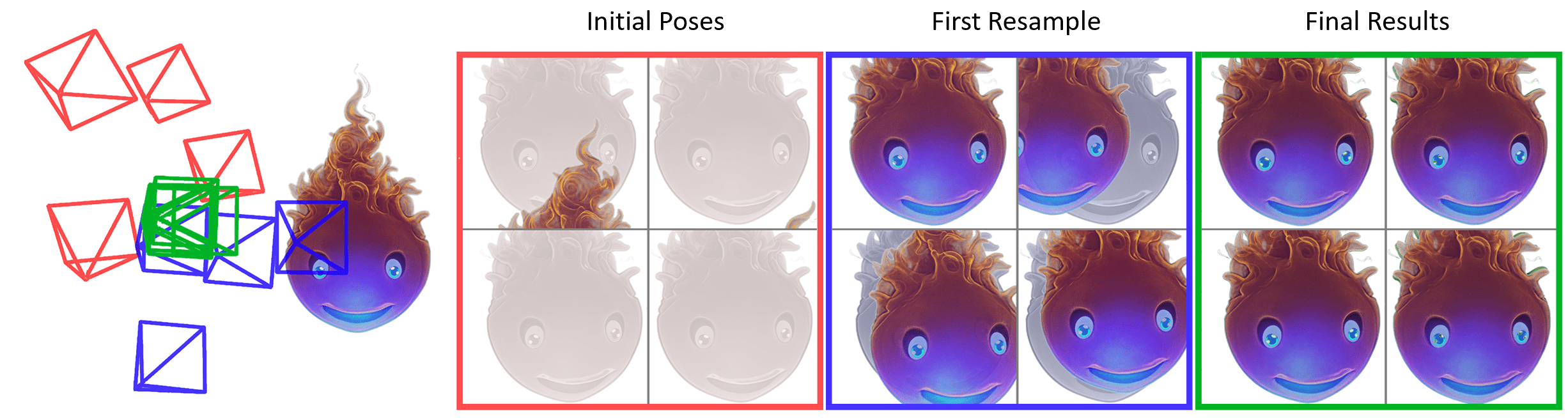

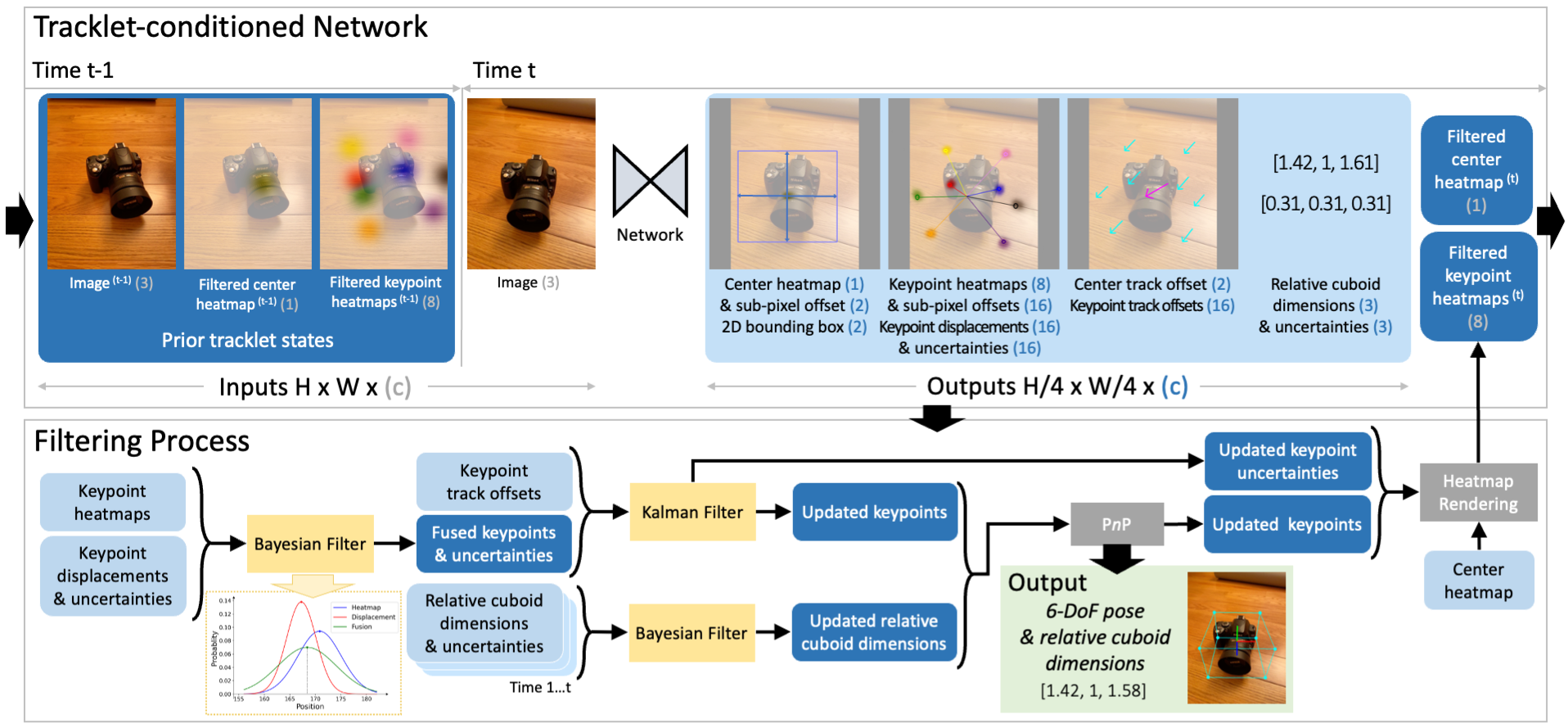

I work at the intersection of perception and decision-making — teaching models to understand what they're seeing well enough to act on it. In research, that meant 6-DoF object pose from a single image, pose tracking in dynamic scenes, NeRF-based pose optimization, and grounding natural-language commands in robot behavior. In industry, it means turning those ideas into video-understanding and recommendation models that run at billion-user scale.

Most of what I care about lives in the translation problem: the gap between a working research prototype and a reliable production system is usually larger than the gap between two papers. My work is shaped by moving between both sides — from a GPU cluster in a research lab to a ranking stack serving hundreds of millions of daily requests.

Full publication list on Google Scholar.